High-performance computing (HPC) clusters come in a variety of shapes and sizes, depending on the scale of the problems you’re working on, the number of different people using the cluster, and what kinds of resources they need to use.

However, it’s often not clear what separates the kind of cluster you might build for your small research team from the kind that might serve a large laboratory with many different researchers:

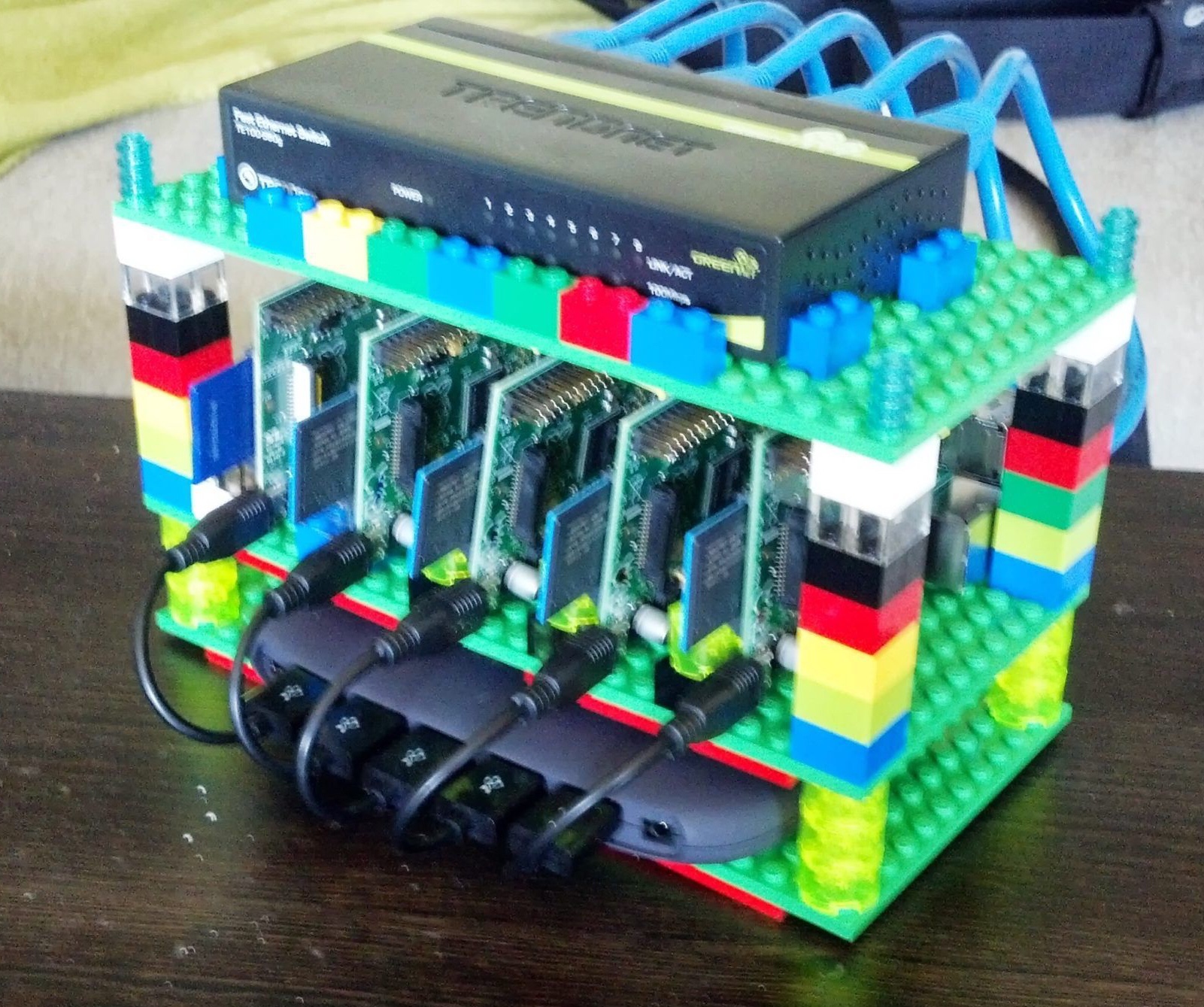

There are lots of differences between a supercomputer and my toy Raspberry Pi cluster, but they also have a lot in common. From a management perspective, a big part of the difference is how many specialized node types you might find in the larger system.
